‘How will AR and VR transform 5G?’ - Henry Tirri, CTO at InterDigital

Augmented Reality technology will push the boundaries of connectivity and drive innovation in a 5G world.

Let’s take a glimpse into the future. You're in your car on your way to a meeting. The car is equipped with an advanced driver assistance system (ADAS). There's a turn up ahead, but you're in an unfamiliar neighborhood and the street signs aren't easy to read.

You aren't sure if you're supposed to turn off this main road at this intersection or the one just past it. Fortunately, the ADAS is a step ahead of you: the street information is superimposed into your visual field as you approach the turn. It changes the picture you see as you approach the decision point, clearly showing your navigational path.

Now that you made the turn off the main street and are looking for the building number of your destination, the building information appears in your visual field, even though the actual building number is partially obscured by a tree. The system finds an available parking space nearest to the door you need to enter and navigates you directly to it. You arrive at your meeting on time, having avoided the stress of making a wrong turn, or having to find space in a crowded parking lot.

Augmenting our reality

While the activity this system facilitates is mundane, I’d argue the technology – the augmentation and virtualization of our reality – is exciting. These evolutions have been envisioned in the science fiction realm for more than three decades already. William Gibson’s descriptions of “cyberspace” in his 1984 novel Neuromancer are among the earliest known popular references to these virtual worlds. Michael Crichton's 1994 novel Disclosure (and the film of the same name, released later that year) contains a scene where the main character searches through a secure computer file system via virtual reality (VR) glasses. Long a staple of sci-fi films, VR technology plays a central role in Tron (1982), The Matrix (1999) and several other films. Now in 2019, virtual and augmented reality technology is finally approaching widespread use.

Yet as immersive and captivating as VR is, there are likely to be far more real-world applications for a more “mobile” augmented reality (AR) technology. The fully immersive VR experience is – at least for now – best when confined to a specific space, like a laboratory, classroom or gaming room; that is, VR operates in a “closed world”. On the other hand, AR can begin to more seamlessly integrate with our daily life – the “open world” – because it combines important bits of supplemental visual information with our perception of the physical world around us.

Connectivity is the defining, and sometimes limiting, factor of this augmented reality. Augmenting our reality requires mobility because we are constantly on the go. Connectivity is one of the main reasons why this technology that's been envisioned for more than 30 years hasn't yet made it into mainstream use. There's simply too much data to be encoded, transported and delivered to our devices for existing network technology to handle it at scale. This is why we're going to need 5G – and future wireless networks – to make AR (and any kind of mobile VR) a practical reality.

How will AR and VR transform 5G?

Some people might ask the question "How will 5G transform AR and VR?" To us at InterDigital, the more interesting angle is to look at its inverse: How will AR and VR transform 5G? We'll return to our ADAS example to illustrate. But first, a quick look back to AR in 4G.

The best-known AR applications thus far have been games, especially the wildly popular Pokémon Go and the recent Harry Potter: Wizards Unite. These have been possible in a 4G network environment because – as exciting and compelling as the games are – the data delivered through the game is relatively compact. There is a fixed (and relatively static) number of game scenarios and challenges. The game itself can work within the constraints of 4G in large part because there isn't much data to deliver, and a lot of the intensive computing can be done in the cloud. Players can tolerate a fair amount of latency, and they are generally moving only at walking speed. Catching a Pokémon or fending off a dementor attack is fun in part because you're on foot when you do it.

However, most of the background technology will have to change when AR applications get to the level of the future ADAS. When a car is traveling at freeway speeds, the ADAS will need to serve up both relatively static information, like street signs, building numbers and intersection images, and more dynamic data, like notifications about traffic, weather and road conditions, and other travel warnings.

The ADAS of the future will also need to deliver that information from a variety of viewing angles, wherever the car happens to be. Depending on how that application is coded, this kind of ADAS will require a huge amount of computing power and bandwidth. Some of the computing can be done onboard the vehicle, but much of it will need to be done at the network edge, where computing horsepower and energy are more plentiful. Besides, it makes more sense for street maps and visual information to be stored close to the device where they will be used. If a car is driving in Los Angeles, there's no need (and probably insufficient storage anyway) for the Chicago, New York and Seattle maps to be onboard that car. The application data is thus not going to be replicated in every car in exactly the same way.
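To make the Los Angeles example concrete, here is a minimal Python sketch of how a car (or the edge server nearest to it) might decide which regional map data is worth holding locally. Everything in it is an assumption for illustration: the `Region` class, the `regions_to_cache` function and the rough bounding boxes are hypothetical and not part of any real ADAS or 5G interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    """A hypothetical map region described by a rough bounding box."""
    name: str
    lat_min: float
    lat_max: float
    lon_min: float
    lon_max: float

    def contains(self, lat: float, lon: float) -> bool:
        return (self.lat_min <= lat <= self.lat_max
                and self.lon_min <= lon <= self.lon_max)


# Illustrative, approximate boxes only; not survey-grade boundaries.
REGIONS = [
    Region("los_angeles", 33.6, 34.4, -118.7, -117.9),
    Region("chicago", 41.6, 42.1, -88.0, -87.5),
]


def regions_to_cache(lat: float, lon: float, regions=REGIONS) -> list[str]:
    """Return the names of the regions whose map data covers this position."""
    return [r.name for r in regions if r.contains(lat, lon)]


# A car in downtown Los Angeles only needs the Los Angeles data.
print(regions_to_cache(34.05, -118.24))
```

The point of the sketch is only that the lookup is driven by where the car is, so the data held near each car differs from city to city.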

To understand the bandwidth needs of this future, consider the needs of just this one system: multiply the data and computing demands of a single ADAS by the many thousands of cars in any given city. Not only do we need a bigger pipe for all of that data, we also need edge servers to deliver the right data with the lowest possible latency (much more critical in a fast-moving vehicle), and those networks need software-defined slices that provision all of these resources for this particular type of application. A 5G ecosystem is better suited to meet these high-volume, low-latency network needs. 4G – designed primarily for a mobile voice, data and video user – just isn't built that way.
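The multiplication described above can be sketched in a few lines of Python. The figures used here (50,000 cars, 25 Mbit/s per car, 20 percent of cars on the road at once) are purely hypothetical placeholders, not measurements or forecasts, and the function name `aggregate_demand_gbps` is made up for this illustration.

```python
def aggregate_demand_gbps(cars: int, per_car_mbps: float,
                          active_fraction: float = 1.0) -> float:
    """Total downlink demand in Gbit/s for a city's ADAS-equipped cars.

    cars: number of equipped cars in the city (assumed value)
    per_car_mbps: average data rate one car needs, in Mbit/s (assumed value)
    active_fraction: share of cars driving at the same time, from 0 to 1
    """
    if cars < 0 or per_car_mbps < 0 or not 0.0 <= active_fraction <= 1.0:
        raise ValueError("inputs must be non-negative, fraction within 0..1")
    return cars * active_fraction * per_car_mbps / 1000.0


# Hypothetical city: 50,000 cars, 25 Mbit/s each, one in five on the road.
print(aggregate_demand_gbps(50_000, 25.0, 0.2))
```

Whatever the real per-car figure turns out to be, the total scales linearly with the number of cars on the road, which is why a single application can dominate a city's network planning.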

The major difference of 5G

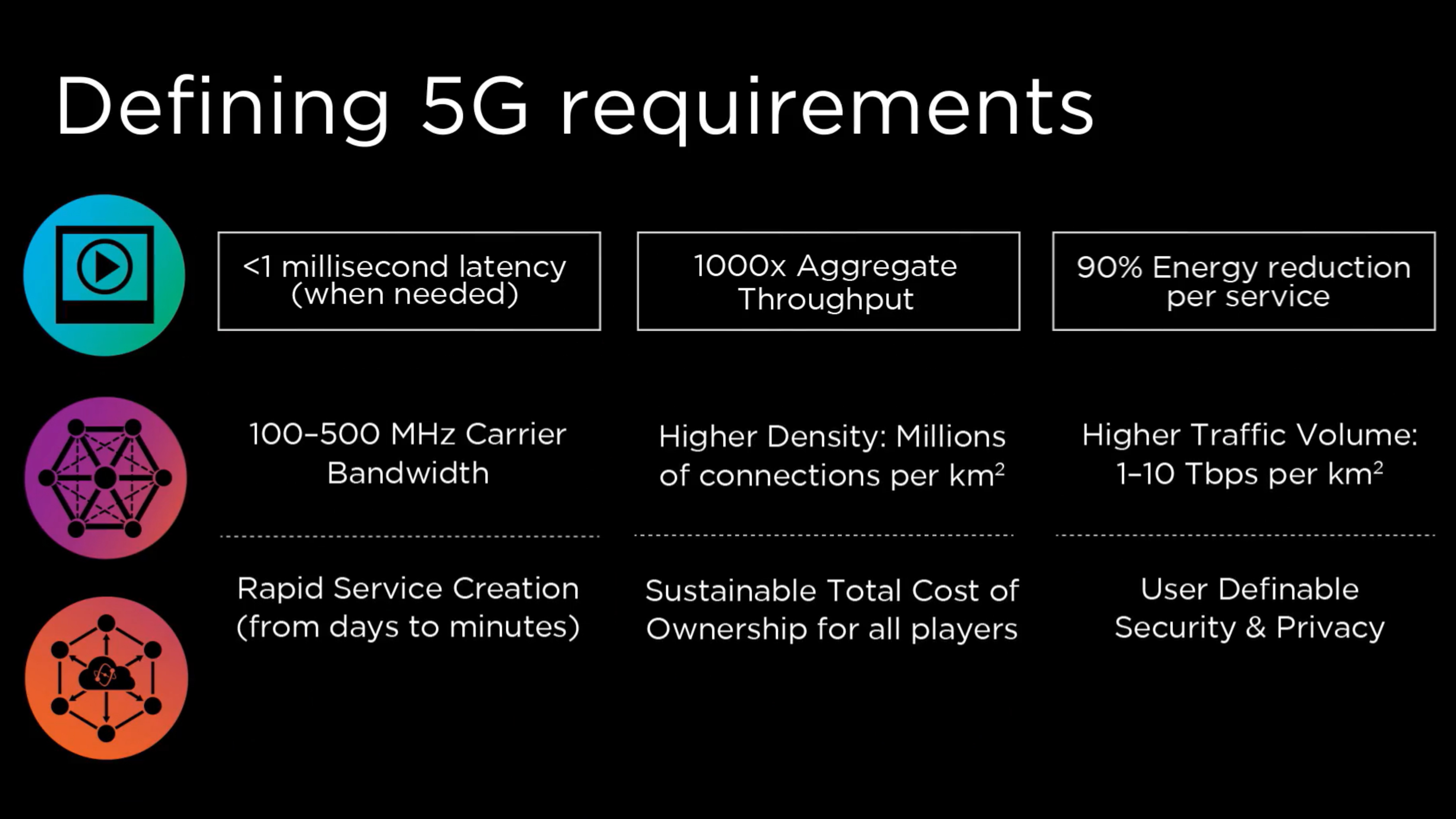

A major reason why 5G architecture is so fundamentally different from its predecessors is the vast array of new use cases that are coming into focus. Augmented reality is just one of them.

Not all of the AR applications we'll see in the future will be as complex as the ADAS example I described above. Other applications, like simple search queries, will use the same infrastructure but will demand fewer resources from it. With AR, search will become a more useful part of our daily lives. We will be able to compare bargains using AR-driven search at the grocery store, or look up statistics about an opposing team in the same visual field in which we watch a football game. AR will become a much more pervasive technology, in the way mobile phones and text messages have become a facet of everyday life for so many of us.

AR technology, enabled by 5G, will by no means be confined to the realm of the automobile or search. Other application domains will include augmented reality tele-medicine, “augmented shopping” (including the virtual fitting of clothing and accessories), augmented reality repair or installation of equipment, augmented reality tourism and travel guides, and many more.

Someday this technology will become so commonplace that we'll have trouble remembering life before it. And 5G will be the connectivity technology that made that ubiquity possible.

Henry Tirri is InterDigital’s Chief Technology Officer and EVP for Research and Development, responsible for leading the company’s technology vision and strategy and advancing the company’s technology roadmaps. An executive with deep experience in a variety of technology areas such as artificial intelligence, automotive and data analytics, Dr. Tirri works closely with other executives to partner with internal R&D efforts, external investments, and corporate development in executing the company’s vision.